Adding Luzmo IQ to your agentic workflow

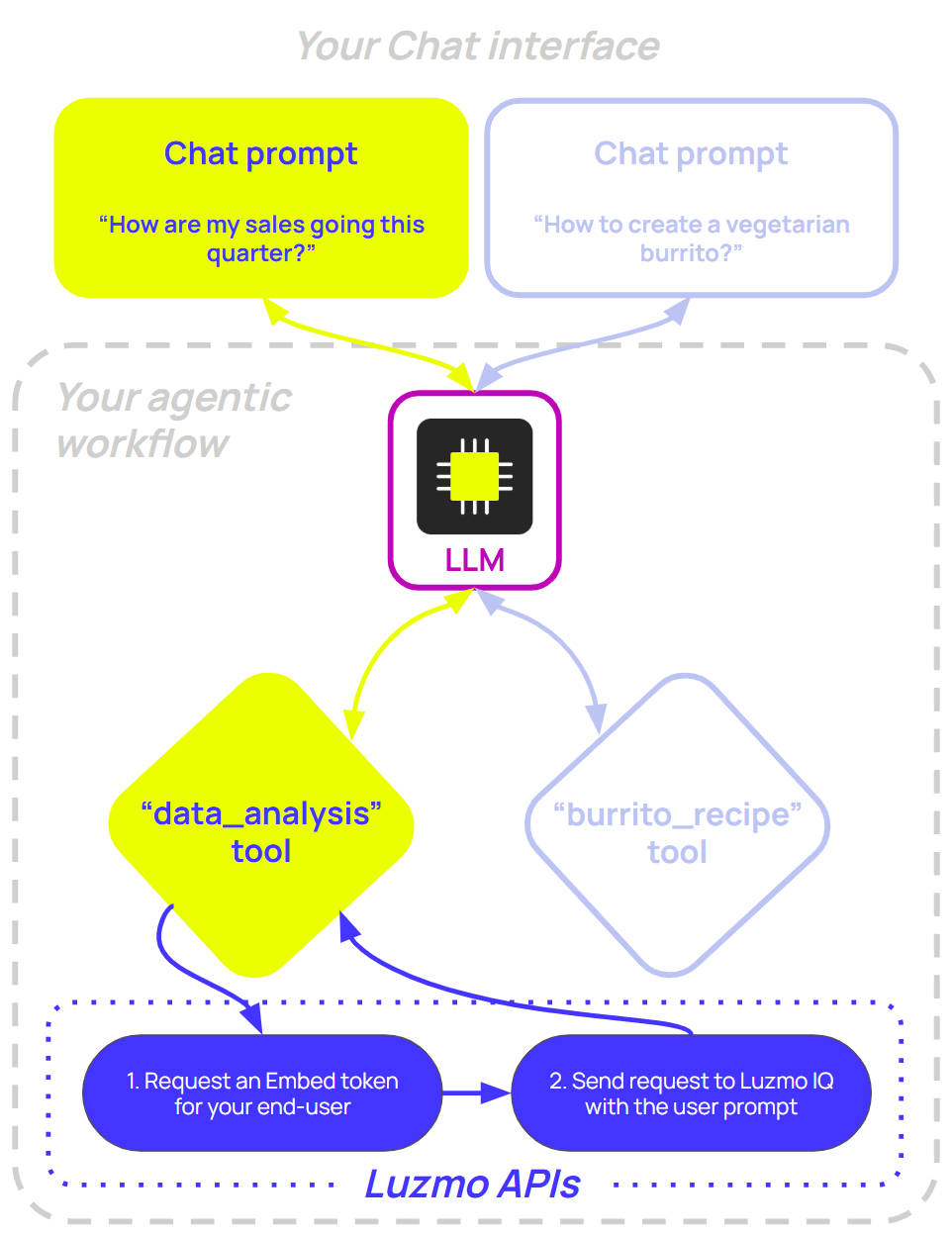

In this guide, we'll show you how to give any LLM agent the ability to query your analytics data using Luzmo's IQMessage REST API. The idea is simple: expose Luzmo IQ as a callable tool, and let the LLM decide when it needs to analyze data to answer a user's question.

This pattern works with any agent framework - raw OpenAI / Claude / ... tool/function calls, LangChain, LangGraph, Deep agents, n8n, or any other setup that supports function/tool calling.

Note: Luzmo IQ is available as an add-on. If you'd like to start using or testing it, please reach out to your Luzmo contact person or support@luzmo.com. You'll also need a valid API key to create Embed Authorization tokens (requested server-side based on your application's logged-in user) for authenticating IQMessage requests.

Your agent loop stays the same, regardless of the framework you use:

A user asks a question

The LLM decides whether it needs data and calls your data_analysis tool

Your data_analysis tool implementation sends the prompt to Luzmo's IQMessage API and returns the result to the LLM

The LLM formulates its final answer

If you're looking for ready-to-use example code, start with one of these:

| Framework | Example implementations |
|---|---|
| OpenAI | TypeScript & Python |
| LangChain | TypeScript & Python |
| LangGraph | TypeScript & Python |
| Deep Agents | TypeScript & Python |
| n8n | Workflow JSON |

The IQMessage endpoint

Every call is a POST to the IQMessage endpoint with a JSON body. The request uses an Embed key-token pair for user authentication and authorization (e.g. access to IQ, which datasets are accessible, and which multitenancy restrictions apply to the queries). The only required property is prompt - the natural-language question you want IQMessage to answer. Everything else, including response_mode and locale_id, is optional, but setting response_mode is recommended (see Response modes below).

curl -X POST https://api.luzmo.com/0.1.0/iqmessage \

-H "Content-Type: application/json" \

-d '{

"action": "create",

"version": "0.1.0",

"key": "<your-embed-key>",

"token": "<your-embed-token>",

"properties": {

"prompt": "How are quarterly sales trending?",

"response_mode": "text_only",

"locale_id": "en"

}

}'

Endpoints:

EU multitenant: https://api.luzmo.com/0.1.0/iqmessage

US multitenant: https://api.us.luzmo.com/0.1.0/iqmessage

VPC-specific: https://vpc-specific-api-url/0.1.0/iqmessage
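If your application serves more than one of these environments, a small helper can keep the endpoint choice in one place. The following is a minimal sketch: the region labels and the iqMessageUrl function name are hypothetical and chosen for this example only, while the URLs come from the list above. The VPC host is something you supply yourself.

```typescript
// Region labels are hypothetical names used only in this sketch.
type LuzmoRegion = "eu" | "us" | "vpc";

// Multitenant API hosts as listed in this guide.
const MULTITENANT_HOSTS: Record<"eu" | "us", string> = {
  eu: "https://api.luzmo.com",
  us: "https://api.us.luzmo.com",
};

// Builds the IQMessage endpoint URL for a region.
// For "vpc", the caller must pass its own VPC-specific API host.
function iqMessageUrl(region: LuzmoRegion, vpcHost?: string): string {
  let host: string;
  if (region === "vpc") {
    if (!vpcHost) {
      throw new Error("A VPC host is required when region is 'vpc'");
    }
    host = vpcHost;
  } else {
    host = MULTITENANT_HOSTS[region];
  }
  // Strip trailing slashes so the path is not doubled up.
  return `${host.replace(/\/+$/, "")}/0.1.0/iqmessage`;
}
```

The same host value is also what you would pass as host to the Luzmo SDK client when requesting Embed tokens, so the two stay in sync.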

The response streams back as JSONL - each line is a JSON object. Concatenate every chunk field value to build the full answer.
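To make the JSONL handling concrete, here is a minimal sketch that works on an already-received response body. The collectChunks name and the sample lines are made up for illustration, and the sketch assumes each chunk value is a string (as you would expect with text_only); lines that are empty or not valid JSON are skipped.

```typescript
// Concatenates the `chunk` field of every JSON line in a JSONL payload.
// Assumption: chunk values are strings; anything else is ignored.
function collectChunks(jsonl: string): string {
  let answer = "";
  for (const line of jsonl.split("\n")) {
    const trimmed = line.trim();
    if (!trimmed) continue; // skip empty lines
    try {
      const evt: unknown = JSON.parse(trimmed);
      if (typeof evt === "object" && evt !== null && "chunk" in evt) {
        const chunk = (evt as { chunk: unknown }).chunk;
        if (typeof chunk === "string") answer += chunk;
      }
    } catch {
      // Not a JSON line: ignore it.
    }
  }
  return answer;
}

// Illustrative payload, not real API output.
const sampleJsonl = [
  '{"chunk":"Sales rose "}',
  '{"chunk":"12% in Q3."}',
  "not json",
  "",
].join("\n");

console.log(collectChunks(sampleJsonl)); // prints "Sales rose 12% in Q3."
```

A streaming variant that reads the response incrementally is shown in the Calling IQMessage section.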

Response modes

IQMessage supports three response modes, which you control with the response_mode property in your request. In an agentic context you might want to use text_only, so the LLM receives a clean string it can reason about and pass on to the user.

text_only - IQMessage returns only a plain-text answer. The LLM can read, summarise, or reason about it. Best for agentic use cases where the LLM is composing the final response.

mixed (default) - IQMessage can return both a textual answer and Flex chart configurations, depending on the question. Useful when you want to surface chart data to your own UI alongside the text. You can render the full response using our Answer component, or in your own interface together with the Flex SDK (to render any generated chart configurations).

chart_only - IQMessage returns only Flex chart configurations, no text. Useful if you want to programmatically embed a generated chart without accompanying prose. You can render these charts using our Flex SDK.
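Since the API default is mixed, an agent integration may want to set text_only explicitly. The sketch below builds the request body described earlier and defaults response_mode to text_only unless the caller overrides it. buildIqMessageBody and its options object are hypothetical names for this example; the body fields themselves (action, version, key, token, properties) are the ones shown in the curl request above.

```typescript
type ResponseMode = "mixed" | "text_only" | "chart_only";

interface IqMessageBody {
  action: "create";
  version: "0.1.0";
  key: string;
  token: string;
  properties: { prompt: string; response_mode: ResponseMode; locale_id?: string };
}

// Builds the JSON body for an IQMessage request.
// Defaults to text_only, which suits an LLM that composes the final answer.
function buildIqMessageBody(
  prompt: string,
  embedKey: string,
  embedToken: string,
  options: { responseMode?: ResponseMode; localeId?: string } = {}
): IqMessageBody {
  if (prompt.trim() === "") {
    throw new Error("prompt is required");
  }
  const properties: IqMessageBody["properties"] = {
    prompt,
    response_mode: options.responseMode ?? "text_only",
  };
  // Only send locale_id when the caller asked for a specific locale.
  if (options.localeId !== undefined) {
    properties.locale_id = options.localeId;
  }
  return { action: "create", version: "0.1.0", key: embedKey, token: embedToken, properties };
}
```

Passing { responseMode: "chart_only" } or { responseMode: "mixed" } switches modes when your own UI renders the charts.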

Core integration flow

Use the steps below to wire Luzmo IQ into an (existing) agent workflow, from tool setup to returning the final result to your LLM. The Luzmo IQ tool call flow will look as follows:

Receive the tool arguments

Request an Embed key-token pair for that user

Call IQMessage with that Embed key-token pair

Return the result to the LLM
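The four steps above can be sketched as a single function. This is an outline under stated assumptions, not the implementation from the example repository: parseToolArgs, runDataAnalysisTool and the ToolDeps interface are hypothetical names, and the token request and IQMessage call are injected as functions so the flow can be read (and tested) without network access. The concrete versions of those two functions follow in the sections below.

```typescript
type Mode = "mixed" | "text_only" | "chart_only";

interface DataAnalysisArgs {
  prompt: string;
  response_mode?: Mode;
  locale_id?: string;
}

interface EmbedCredentials {
  embedKey: string;
  embedToken: string;
}

// Injected dependencies (hypothetical shape for this sketch).
interface ToolDeps {
  requestEmbedToken: (userId: string) => Promise<EmbedCredentials>;
  callIqMessage: (args: DataAnalysisArgs, creds: EmbedCredentials) => Promise<string>;
}

// Step 1: tool arguments arrive as a JSON string written by the model, so validate them.
function parseToolArgs(rawArguments: string): DataAnalysisArgs {
  const parsed: unknown = JSON.parse(rawArguments);
  if (typeof parsed !== "object" || parsed === null) {
    throw new Error("Tool arguments must be a JSON object");
  }
  const { prompt, response_mode, locale_id } = parsed as Record<string, unknown>;
  if (typeof prompt !== "string" || prompt.trim() === "") {
    throw new Error("Tool arguments must include a non-empty prompt");
  }
  const modes: readonly string[] = ["mixed", "text_only", "chart_only"];
  return {
    prompt,
    // Unknown modes are dropped so the API default applies.
    response_mode:
      typeof response_mode === "string" && modes.includes(response_mode)
        ? (response_mode as Mode)
        : undefined,
    locale_id: typeof locale_id === "string" ? locale_id : undefined,
  };
}

async function runDataAnalysisTool(
  rawArguments: string,
  userId: string,
  deps: ToolDeps
): Promise<string> {
  const args = parseToolArgs(rawArguments);               // 1. receive the tool arguments
  const creds = await deps.requestEmbedToken(userId);     // 2. request an Embed key-token pair
  const answer = await deps.callIqMessage(args, creds);   // 3. call IQMessage with that pair
  return answer;                                          // 4. return the result to the LLM
}
```

Validating the arguments before requesting a token avoids creating an authorization for a call that cannot succeed.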

Defining the tool

Here's the tool schema to register with your LLM. It works with OpenAI, Anthropic, and any API that follows the function-calling spec. Add this object to your tools array, alongside any other tools your agent might need:

{

"type": "function",

"function": {

"name": "data_analysis",

"description": "Query analytics data. Use for questions about metrics, KPIs, dashboards, trends, or business performance.",

"parameters": {

"type": "object",

"properties": {

"prompt": { "type": "string", "description": "The data question to answer" },

"response_mode": {

"type": "string",

"enum": ["mixed", "text_only", "chart_only"],

"description": "Response type: mixed (text and/or chart), text_only, or chart_only. Defaults to mixed if omitted."

},

"locale_id": {

"type": "string",

"description": "Locale/language for the answer (e.g. en, fr). Defaults to en if omitted."

}

},

"required": ["prompt"]

}

}

}

The description is important - the LLM uses it to decide when to call the tool. Be specific about the kinds of questions it should handle to ensure optimal tool usage!
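The schema above is written in the OpenAI Chat Completions tool shape. If you call Anthropic's native Messages API rather than an OpenAI-compatible endpoint, tools are declared with name, description and input_schema at the top level, so the same JSON Schema can be reused with a small conversion. The sketch below shows that mapping; toAnthropicTool and the two interfaces are hypothetical names for this example, and it is worth checking your provider's current documentation before relying on it.

```typescript
// OpenAI-style function tool, as used in the schema above.
interface OpenAiFunctionTool {
  type: "function";
  function: {
    name: string;
    description: string;
    parameters: Record<string, unknown>;
  };
}

// Anthropic Messages API tool shape.
interface AnthropicTool {
  name: string;
  description: string;
  input_schema: Record<string, unknown>;
}

// Reuses the same JSON Schema under Anthropic's `input_schema` key.
function toAnthropicTool(tool: OpenAiFunctionTool): AnthropicTool {
  const { name, description, parameters } = tool.function;
  return { name, description, input_schema: parameters };
}

const dataAnalysisOpenAiTool: OpenAiFunctionTool = {
  type: "function",
  function: {
    name: "data_analysis",
    description:
      "Query analytics data. Use for questions about metrics, KPIs, dashboards, trends, or business performance.",
    parameters: {
      type: "object",
      properties: {
        prompt: { type: "string", description: "The data question to answer" },
      },
      required: ["prompt"],
    },
  },
};

const dataAnalysisAnthropicTool = toAnthropicTool(dataAnalysisOpenAiTool);
```

The description text carries over unchanged, so the guidance on writing a specific description applies to both formats.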

Requesting an Embed Authorization token

When the LLM decides to call your data_analysis tool, your backend should first request an Embed Authorization token on behalf of the currently logged-in user in your application.

That token is what defines what IQ can access and how access is scoped:

It should grant access to the datasets needed to answer the request using the access property (through one or more collections, and/or directly to one or more datasets). Make sure to also grant access to any dashboard(s) your end-user should have access to (similar to datasets, either through a collection or directly to the dashboard) - this will ensure that any scheduled resources (e.g. exports, alerts) on these dashboards continue to work.

It should include your multitenancy restrictions, so queries are automatically scoped to the necessary tenant/account/user configuration (e.g. Embed filters and/or Connection overrides).

Optionally use iq.context to add a master prompt that steers Luzmo IQ (e.g. response format, terminology).

For all properties, see the Create Authorization API documentation.

Luzmo API key and token required. The examples below use your Luzmo API key-token pair to create Embed tokens. Create one in your Luzmo profile settings and pass it via environment variables such as LUZMO_API_KEY and LUZMO_API_TOKEN.
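If the collections, datasets and dashboards differ per user or tenant, you may want to assemble the access property from ID lists rather than hard-coding it. The sketch below does that using the same entry shapes as the token request in this guide (inheritRights: 'use' for collections, rights: 'use' for datasets and dashboards). buildEmbedAccess and AccessInput are hypothetical names, and the guard that requires at least one collection or dataset is this example's own choice, based on the point above that IQ needs dataset access.

```typescript
interface EmbedAccess {
  collections?: { id: string; inheritRights: "use" }[];
  datasets?: { id: string; rights: "use" }[];
  dashboards?: { id: string; rights: "use" }[];
}

// Hypothetical input shape: plain ID lists resolved by your application.
interface AccessInput {
  collectionIds?: string[];
  datasetIds?: string[];
  dashboardIds?: string[];
}

// Builds the `access` property for an Embed authorization request.
function buildEmbedAccess(input: AccessInput): EmbedAccess {
  const collectionIds = input.collectionIds ?? [];
  const datasetIds = input.datasetIds ?? [];
  const dashboardIds = input.dashboardIds ?? [];

  // IQ needs data to query: via a collection and/or a directly granted dataset.
  if (collectionIds.length === 0 && datasetIds.length === 0) {
    throw new Error("Grant at least one collection or dataset so IQ has data to query");
  }

  const access: EmbedAccess = {};
  if (collectionIds.length > 0) {
    access.collections = collectionIds.map((id) => ({ id, inheritRights: "use" as const }));
  }
  if (datasetIds.length > 0) {
    access.datasets = datasetIds.map((id) => ({ id, rights: "use" as const }));
  }
  if (dashboardIds.length > 0) {
    access.dashboards = dashboardIds.map((id) => ({ id, rights: "use" as const }));
  }
  return access;
}
```

The returned object can be passed as the access value when creating the authorization.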

import Luzmo from '@luzmo/nodejs-sdk';

const luzmoClient = new Luzmo({

api_key: process.env.LUZMO_API_KEY!,

api_token: process.env.LUZMO_API_TOKEN!,

host: 'https://api.luzmo.com'

});

async function requestEmbedToken(

userId: string,

name: string,

email: string,

suborganization: string

): Promise<{ embedKey: string; embedToken: string }> {

const response = await luzmoClient.create('authorization', {

type: 'embed',

username: userId,

name,

email,

suborganization,

access: {

collections: [{ id: '<collection_id>', inheritRights: 'use' }],

datasets: [{ id: '<dataset_id>', rights: 'use' }],

dashboards: [{ id: '<dashboard_id>', rights: 'use' }]

},

iq: {

context: `This is a master prompt you can use to steer Luzmo IQ in specific directions.

For example:

- Use a different response format (e.g. bullet points, tables)

- Add customer-specific details

- Enforce terminology

- Include confidence levels

These instructions apply to all IQMessage requests made with this token.`

}

});

return { embedKey: response.id, embedToken: response.token };

}

Calling IQMessage

The function below sends a prompt to IQMessage and reads back the streamed JSONL response:

async function handleIqMessage(

prompt: string,

embedKey: string,

embedToken: string,

response_mode: "mixed" | "text_only" | "chart_only" = "mixed",

locale_id: string = "en"

): Promise<string> {

const response = await fetch("https://api.luzmo.com/0.1.0/iqmessage", {

method: "POST",

headers: { "Content-Type": "application/json" },

body: JSON.stringify({

action: "create",

version: "0.1.0",

key: embedKey,

token: embedToken,

properties: { prompt, response_mode, locale_id }

})

});

if (!response.ok) throw new Error(`IQMessage error: ${response.status}`);

let result = "";

const reader = response.body!.getReader();

const decoder = new TextDecoder();

let buffer = "";

// Stream is JSONL: read until done; buffer handles lines split across chunks.

while (true) {

const { done, value } = await reader.read();

if (done) break;

buffer += decoder.decode(value, { stream: true });

const lines = buffer.split("\n");

buffer = lines.pop() ?? "";

for (const line of lines) {

try {

const evt = JSON.parse(line.trim());

if (evt.chunk) result += evt.chunk;

} catch {

// Ignore non-JSON lines (e.g. keepalive, empty or malformed).

}

}

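}

// Flush the decoder and parse a final line that arrived without a trailing newline.

buffer += decoder.decode();

if (buffer.trim()) {

try {

const evt = JSON.parse(buffer.trim());

if (evt.chunk) result += evt.chunk;

} catch {

// Ignore a malformed trailing line.

}

}

return result || "(No data returned)";

}

The agent loop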
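Because handleIqMessage throws on a non-OK HTTP status, and parsing the model's tool arguments can also throw, it can help to convert tool failures into a string before handing the result back to the model. That way every tool call still receives a tool message and the LLM can explain the problem or retry. The wrapper below is a minimal sketch; runToolSafely is a hypothetical helper, not part of any SDK.

```typescript
// Runs a tool implementation and converts any thrown error into a
// plain-text result that can be sent back to the model as tool output.
async function runToolSafely(
  toolName: string,
  run: () => Promise<string>
): Promise<string> {
  try {
    return await run();
  } catch (err) {
    const message = err instanceof Error ? err.message : String(err);
    return `Tool ${toolName} failed: ${message}`;
  }
}
```

In the loop, the body of the data_analysis branch would be passed as the run callback, for example runToolSafely("data_analysis", async () => handleIqMessage(args.prompt, embedKey, embedToken)).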

OpenAI API key required. The examples below use the OpenAI API. Ensure you have an API key (e.g. from platform.openai.com) and pass it when creating the client, typically via an environment variable such as OPENAI_API_KEY.

A complete, runnable example is available in the openai directory of our example repository (TypeScript and Python).

With the tool defined and implemented, plug it into your agent loop. Request the Embed token only when handling a data_analysis tool call:

import OpenAI from "openai";

const openai = new OpenAI({ apiKey: process.env.OPENAI_API_KEY });

const messages = [

{ role: "system", content: "You help users with data questions. Use data_analysis for analytics." },

{ role: "user", content: userQuestion }

];

const tools = [

..., // Your other tool definitions

{

type: "function",

function: { name: "data_analysis", ... }, // See `data_analysis` schema above

}

];

// Loop until the model responds without tool calls, or hit max rounds (safety cap).

for (let i = 0; i < 10; i++) {

const response = await openai.chat.completions.create({

model: "gpt-4o-mini",

messages,

tools,

tool_choice: "auto"

});

const choice = response.choices[0];

// No tool calls means the model has the final answer; return it.

if (!choice.message.tool_calls?.length) {

return choice.message.content;

}

messages.push(choice.message);

for (const tc of choice.message.tool_calls) {

let result: string;

if (tc.function.name === "data_analysis") {

// First request a Luzmo Embed Authorization token for your end-user

const { embedKey, embedToken } = await requestEmbedToken(

currentUserId,

currentUserName,

currentUserEmail,

currentUserSuborg

);

// Then send the prompt to the Luzmo IQ API to perform a data analysis

const args = JSON.parse(tc.function.arguments);

result = await handleIqMessage(

args.prompt,

embedKey,

embedToken,

args.response_mode ?? "mixed",

args.locale_id ?? "en"

);

} else {

// Handle other tools as needed

result = `(Tool ${tc.function.name} not implemented in this example)`;

}

messages.push({ role: "tool", tool_call_id: tc.id, content: result });

}

}

Framework implementations

LangChain

LangChain lets you register data_analysis as a first-class tool and hand it straight to a LangChain agent graph - no manual message loop required. LangChain handles the tool-call / tool-result round-trips for you, and you get built-in support for memory, callbacks, and tracing on top.

A complete, runnable example is available in the langchain directory of our example repository (TypeScript and Python).

import { DynamicStructuredTool } from "@langchain/core/tools";

import { createAgent } from "langchain";

import { ChatOpenAI } from "@langchain/openai";

import { z } from "zod";

const dataAnalysisTool = new DynamicStructuredTool({

name: "data_analysis",

description: "Query analytics data for metrics, KPIs, and business performance.",

schema: z.object({

prompt: z.string().describe("The data question to answer."),

response_mode: z

.enum(["mixed", "text_only", "chart_only"])

.optional()

.default("mixed")

.describe("Response type: mixed (text and/or chart), text_only, or chart_only."),

locale_id: z

.string()

.optional()

.default("en")

.describe("Locale/language for the answer (e.g. en, fr).")

}),

func: async ({ prompt, response_mode, locale_id }) => {

const { embedKey, embedToken } = await requestEmbedToken(

currentUserId,

currentUserName,

currentUserEmail,

currentUserSuborg

);

return handleIqMessage(prompt, embedKey, embedToken, response_mode ?? "mixed", locale_id ?? "en");

}

});

const llm = new ChatOpenAI({ model: "gpt-4o-mini" });

const agent = createAgent({

model: llm,

tools: [dataAnalysisTool],

systemPrompt: "You help users with data questions. Use data_analysis for analytics."

});

const result = await agent.invoke({ messages: [{ role: "user", content: "What were last month's sales?" }] });

console.log(result.messages.at(-1)?.content);

If you prefer a graph-based orchestration style with explicit state and conditional edges, use LangGraph below.

LangGraph

If you're using LangGraph, wrap the IQMessage call as a tool and pass it to create_react_agent (Python) or createReactAgent (JavaScript). LangGraph is only available for Python and JavaScript; for other languages, use the agent loop pattern above.

A complete, runnable example is available in the langgraph directory of our example repository (TypeScript and Python).

import { tool } from "@langchain/core/tools";

import { createReactAgent } from "@langchain/langgraph/prebuilt";

import { ChatOpenAI } from "@langchain/openai";

import { z } from "zod";

const dataAnalysis = tool(

async ({ prompt, response_mode, locale_id }) => {

const { embedKey, embedToken } = await requestEmbedToken(

currentUserId,

currentUserName,

currentUserEmail,

currentUserSuborg

);

return handleIqMessage(

prompt,

embedKey,

embedToken,

response_mode ?? "mixed",

locale_id ?? "en"

);

},

{

name: "data_analysis",

description: "Query analytics data for metrics, KPIs, and business performance.",

schema: z.object({

prompt: z.string().describe("The data question to answer."),

response_mode: z

.enum(["mixed", "text_only", "chart_only"])

.optional()

.default("mixed")

.describe("Response type: mixed (text and/or chart), text_only, or chart_only."),

locale_id: z

.string()

.optional()

.default("en")

.describe("Locale/language for the answer (e.g. en, fr).")

})

}

);

const llm = new ChatOpenAI({ model: "gpt-4o-mini" });

const agent = createReactAgent({ llm, tools: [dataAnalysis] });

const result = await agent.invoke({

messages: [{ role: "user", content: "What were last month's sales?" }]

});

Deep agents

Deep agents (Python) and Deep agents (TypeScript) provide an agent harness with built-in planning, file systems, and subagent support. Register data_analysis as a tool and pass it to create_deep_agent (Python) or createDeepAgent (TypeScript). Deep agents is only available for Python and JavaScript; for other languages, use the agent loop pattern above.

A complete, runnable example is available in the deepagents directory of our example repository (TypeScript and Python).

import * as z from "zod";

import { createDeepAgent } from "deepagents";

import { tool } from "langchain";

const dataAnalysis = tool(

async ({ prompt, response_mode, locale_id }) => {

const { embedKey, embedToken } = await requestEmbedToken(

currentUserId,

currentUserName,

currentUserEmail,

currentUserSuborg

);

return handleIqMessage(

prompt,

embedKey,

embedToken,

response_mode ?? "mixed",

locale_id ?? "en"

);

},

{

name: "data_analysis",

description: "Query analytics data for metrics, KPIs, and business performance.",

schema: z.object({

prompt: z.string().describe("The data question to answer."),

response_mode: z

.enum(["mixed", "text_only", "chart_only"])

.optional()

.default("mixed")

.describe("Response type: mixed (text and/or chart), text_only, or chart_only."),

locale_id: z

.string()

.optional()

.default("en")

.describe("Locale/language for the answer (e.g. en, fr).")

})

}

);

const agent = createDeepAgent({

tools: [dataAnalysis],

system: "You help users with data questions. Use data_analysis for analytics.",

});

const result = await agent.invoke({

messages: [{ role: "user", content: "What were last month's sales?" }]

});

n8n

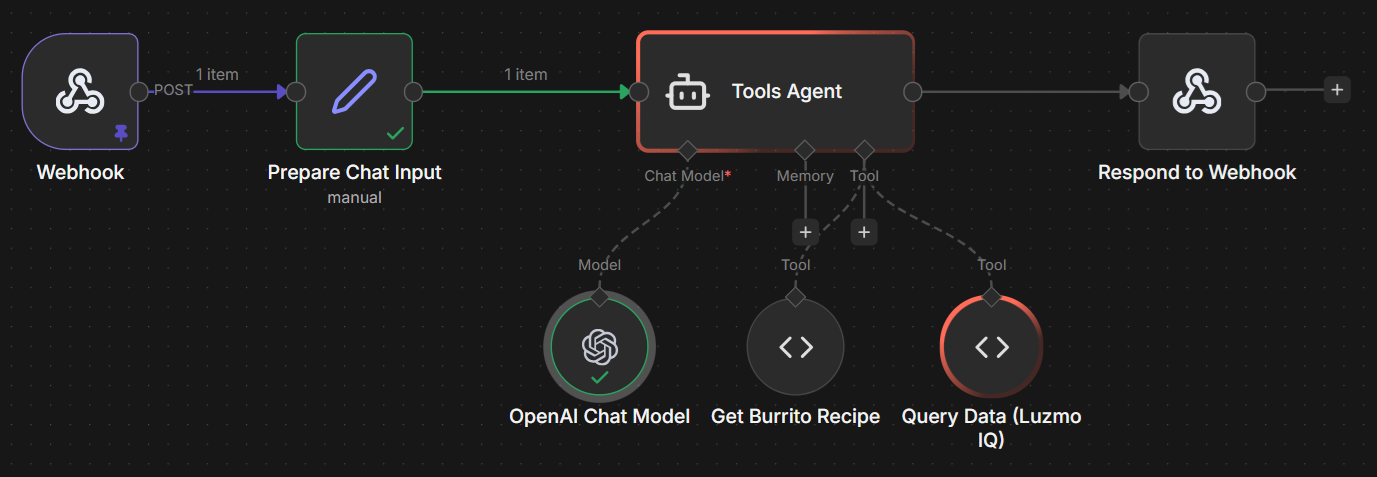

In n8n, a Tools Agent node sits at the centre of the workflow. It is connected to a chat model (e.g. OpenAI Chat Model) and one or more tool nodes. One of those tools is a Custom Code Tool named data_analysis: when the agent decides a question requires analytics data, it calls this tool, which sends the prompt to the Luzmo IQMessage API and returns the answer as plain text.

A complete, importable workflow like the one shown in the image above is available in the n8n directory of our example repository.

Give the tool a clear description so the agent knows when to invoke it:

Name: data_analysis

Description: Query analytics/business data via Luzmo. Use when the user asks about sales, data, metrics, dashboards, charts, or business intelligence.

Then add the following JavaScript in the node's code field:

const prompt = typeof query === 'string' ? query : (query?.prompt || '');

const responseMode =

typeof query === 'object' && query?.response_mode ? query.response_mode : 'text_only';

const localeId = typeof query === 'object' && query?.locale_id ? query.locale_id : 'en';

const key = $json.luzmoEmbedKey;

const token = $json.luzmoEmbedToken;

const host = $json.luzmoApiHost;

if (!key || !token || !host) {

return 'Error: Missing Luzmo request properties. Required: luzmoEmbedKey, luzmoEmbedToken, luzmoApiHost.';

}

try {

const response = await this.helpers.httpRequest({

url: `${host}/0.1.0/iqmessage`,

method: 'POST',

headers: { 'Content-Type': 'application/json' },

body: {

action: 'create',

version: '0.1.0',

key,

token,

properties: { prompt, response_mode: responseMode, locale_id: localeId },

},

encoding: 'text',

returnFullResponse: true,

});

const status = response?.statusCode ?? response?.status ?? 200;

const rawText =

typeof response?.body === 'string'

? response.body

: typeof response?.data === 'string'

? response.data

: '';

if (status >= 400) {

return `Luzmo API error ${status}: ${rawText || '(empty error body)'}`;

}

let result = '';

for (const line of rawText.split('\n')) {

const clean = line.trim();

if (!clean) continue;

try {

const evt = JSON.parse(clean);

if (evt.chunk != null) result += String(evt.chunk);

} catch (_) {

// Ignore malformed/non-JSON lines.

}

}

return result || '(No data returned)';

} catch (err) {

const status = err?.response?.statusCode ?? err?.response?.status ?? 'unknown';

const body =

typeof err?.response?.body === 'string'

? err.response.body

: typeof err?.message === 'string'

? err.message

: JSON.stringify(err);

return `Luzmo API error ${status}: ${body}`;

}

The Embed key-token pair and API host must be available in the workflow context before the agent runs - pass them in from your trigger or a preceding Set node (e.g. luzmoEmbedKey, luzmoEmbedToken, luzmoApiHost). For API hosts, use https://api.luzmo.com (EU multitenant), https://api.us.luzmo.com (US multitenant), or your VPC-specific host.

Next Steps

IQMessage API reference: full request/response schema

Generating an Embed Authorization token: how to create and scope embed tokens

Luzmo IQ API introduction: broader overview of the IQ API

Creating an IQ Chat component: if you also want a ready-made chat UI alongside your agent